A Quick Little Guide to Troubleshooting R14 Issues On Heroku Before Restarting Dynos

The idea for this guide came about because Airbrake reported an ActiveRecord::ConnectionTimeoutError: could not obtain a database connection within 5.000 seconds (waited 5.000 seconds) error for a Heroku application in production.

It was likely caused by an R14 Memory Quota Exceeded in Ruby error.

First do no harm

I searched Google for "restarting heroku database" and stumbled on this Stack Overflow post.

This led me to the following tools, which I want to document for my own purposes:

Check with these utilities from Heroku

pg:diagnose – This little tool generates a mini-diagnostic report on your database. It gives you information about indexes, connections, blocking queries, and more. I suggest you check out the link for a bit more about what it does.

Among the useful things it reports: slow SQL queries, unused database indexes, and how many connections to the database you have open.

The output looks something like:

GREEN: Connection Count

GREEN: Long Queries

GREEN: Idle in Transaction

GREEN: Indexes

GREEN: Bloat

GREEN: Hit Rate

GREEN: Blocking Queries

GREEN: Load

GREEN: Sequences

In my specific case, the Connection Count was green, but I was still getting the ActiveRecord::ConnectionTimeoutError.
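If you save a report like the one above to a string or file, a few lines of Ruby can pull out anything that isn't green. This is only a rough sketch: it assumes every check appears on its own line as "STATUS: Check Name", the helper names parse_checks and failing_checks are my own, and the YELLOW line in the sample is invented for illustration.

```ruby
# Sample report text. The check names mirror the output shown above,
# but the YELLOW status is made up for this example.
REPORT = <<~TEXT
  GREEN: Connection Count
  GREEN: Long Queries
  YELLOW: Indexes
  GREEN: Bloat
TEXT

# Parse "STATUS: Check Name" lines into [status, name] pairs,
# skipping blank lines and anything that does not match that shape.
def parse_checks(report)
  report.each_line.filter_map do |line|
    match = line.strip.match(/\A([A-Z]+): (.+)\z/)
    [match[1], match[2]] if match
  end
end

# Return the names of checks whose status is anything other than GREEN.
def failing_checks(report)
  parse_checks(report)
    .reject { |status, _name| status == "GREEN" }
    .map { |_status, name| name }
end

puts failing_checks(REPORT).inspect
```

An empty result only means the report itself is clean. As in my case, that does not rule out a problem elsewhere.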

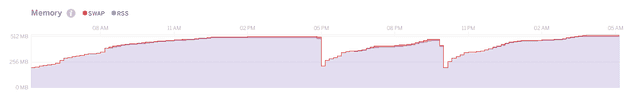

Then of course checking the Heroku metrics tab (mine looked similar to the image below) and seeing a bunch of R14 errors confirmed it.
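The same R14 check can be done against the application logs instead of the metrics tab. Here is a minimal sketch that counts R14 errors per dyno. It assumes the log lines contain the text "heroku[<dyno>]: Error R14", the r14_counts helper is a hypothetical name of mine, and the sample lines (timestamps, dyno names) are invented rather than taken from my app.

```ruby
# Hypothetical log lines for illustration only. Real log output will
# carry your own timestamps and dyno names.
SAMPLE_LOGS = <<~LOGS
  2020-01-01T10:00:00+00:00 heroku[web.1]: Error R14 (Memory quota exceeded)
  2020-01-01T10:00:20+00:00 heroku[web.2]: Error R14 (Memory quota exceeded)
  2020-01-01T10:00:40+00:00 heroku[web.1]: Error R14 (Memory quota exceeded)
  2020-01-01T10:00:41+00:00 app[web.1]: Completed 200 OK in 35ms
LOGS

# Count R14 errors per dyno by matching "heroku[<dyno>]: Error R14".
def r14_counts(log_text)
  counts = Hash.new(0)
  log_text.each_line do |line|
    match = line.match(/heroku\[([^\]]+)\]: Error R14\b/)
    counts[match[1]] += 1 if match
  end
  counts
end

r14_counts(SAMPLE_LOGS).each do |dyno, count|
  puts "#{dyno}: #{count} R14 error(s)"
end
```

To try it on real data, you could save some log output to a file and pass File.read of that file to r14_counts. A dyno that racks up R14s far faster than its siblings is a good first suspect.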

heroku-pg-extras – A handy little tool that complements pg:diagnose with more information, such as processes that are holding locks on the database and queries showing outlier behavior.

If you need to do rolling restarts to bandage your memory leaks…

Here are 2 gems you might find helpful: